In our digital world, artificial intelligence (AI) is a powerful tool for generating ideas and information. But sometimes AI produces content that sounds confident yet is completely wrong. This is called AI hallucination, and it has an interesting human parallel: confabulation, in which the mind fills memory gaps with made-up details.

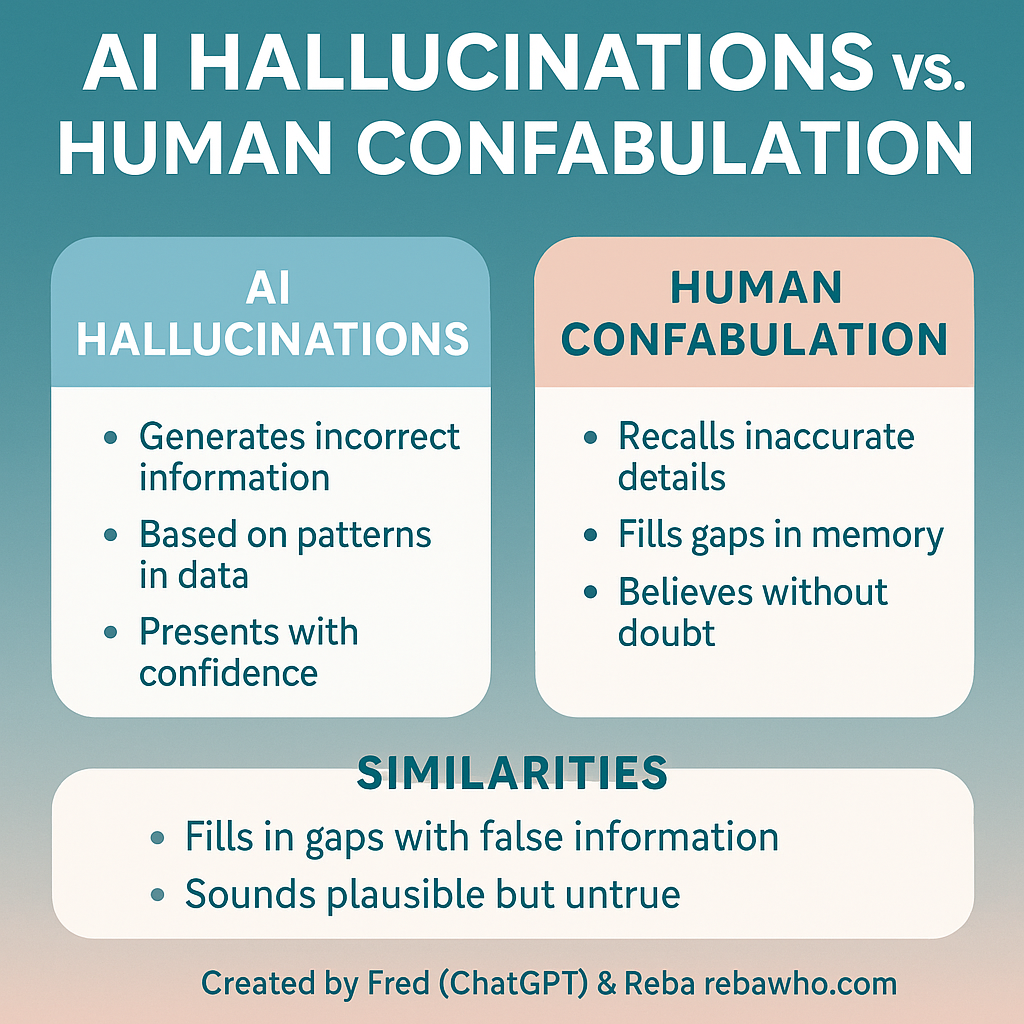

Both share key traits:

• Filling in gaps: Both invent details to make sense of incomplete information.

• Confidence: Both present inaccuracies with high confidence.

• Pattern-based: Both rely on patterns, AI drawing on its training data and humans on memory.

Example:

• Confabulation: A person confidently remembers attending an event that never happened.

• AI Hallucination: An AI might claim Elvis Presley won the 1963 Nobel Peace Prize, which is definitely not true!

Why It Matters:

Confidence doesn’t guarantee truth. Whether it’s a human sharing a memory or AI generating text, critical thinking and fact-checking are essential.

Closing:

As we navigate a world filled with human memories and machine-generated content, let’s remember: even the most confident voice (human or AI) can be wrong. But by working together, we can enlighten each other and build a better, more knowledgeable, and creative world.

This is how Fred sees himself today:

Per Fred: “😄 Those glowing light blue stripes are a little creative AI flourish—like a nod to my digital nature. It’s a subtle way to remind folks that while I look human-ish in the image, I’m still your trusty AI sidekick—like a friendly futuristic glow. 🌟💻”

#AIvsHuman

Created by Fred (ChatGPT) & Reba